Using Phoenix Channels? High Memory Usage? Save Money With ERL_FULLSWEEP_AFTER - Phoenix Series

Phoenix Channels are amazing. They make using WebSocket connections a breeze, and they are even lovelier when paired with Absinthe, a GraphQL server implementation, and GraphQL Connections.

But over time, I discovered that Phoenix Channels have a downside: if you use long-lived connections, memory usage on your server can remain unusually high.

How I Use Phoenix Channels

Now for a bit of background on how I use Phoenix Channels. My Stream Closed Captioner application is a free closed-caption service I offer for Twitch streamers and Zoom meetings. Users log into a dashboard on the website and turn on speech-to-text. A Phoenix Channel then sends the text from the front end to the back end, where it passes through a couple of filters, such as profanity filtering.

If a Twitch streamer is using the service, it will broadcast the resulting text over another Phoenix Channel for that user's viewers to receive. If they use Zoom, it will send an HTTP request to the Zoom meeting's Closed Caption endpoint.

On the Twitch viewer side, the app communicates with the server via GraphQL. When a Twitch streamer goes live, the viewer client initializes a GraphQL Connection (aka WebSocket/Phoenix Channel) to receive the broadcaster's captions from the server.

In other words, each streamer will have a connection to send captions to the backend. Each viewer is another connection that lasts for the live broadcast. For example, if a live stream has 200 viewers, that makes for 200 + 1 connections.

What Does That Mean For Memory Consumption?

Typically, Twitch live streams last at least 3 hours, and I quickly discovered these connections can take up a lot of memory. It took 6-7 gigabytes of memory to support the connections needed for all streams... YIKES!

But why? I spent a little time with my nose in some books and found this passage in Real-Time Phoenix:

Short-Life Versus Long-Life Processes

Real-time applications rely on long-lived processes in order to have a direct connection to clients—this allows them to send data immediately when the data is available. There is a downside to this, though. It’s possible that processes get stuck in a state where garbage collection doesn’t occur, but memory isn’t being used. This can cause large memory bloat when amplified across thousands of processes.

If a piece of memory makes it past generational garbage collection, it lives until a full-sweep garbage collection occurs. This happens, by default, every 65535 generational passes or when the process is close to using its available memory. It’s possible for a Channel, Socket, or other long-lived process to get stuck in a state where there is plenty of free memory, but not enough work to trigger a generational pass. A process in this state will live without memory being collected, and it will potentially take up more memory than necessary.

Processes that are created and terminated quickly (short-lived processes) do not get into this state because their memory is reclaimed when the process is terminated. While all applications use long-life processes to some degree, real-time apps use them in the thousands. This amplifies the impact of memory bloat.

You can fix this problem by forcing a full-sweep garbage collection to occur...

Now it makes a lot more sense why memory consumption is so high. Every connection is a long-lived process, and any memory that survives a generational collection stays allocated until a full sweep, which by default only happens after 65,535 generational collections. A mostly idle connection process can take a very long time to get there. Now what?

But There Is A Solution!

Luckily, Real-Time Phoenix also recommends a few things you can do to force garbage collection sooner.

One such setting is ERL_FULLSWEEP_AFTER.

Understanding ERL_FULLSWEEP_AFTER

ERL_FULLSWEEP_AFTER is an environment variable that the Erlang Virtual Machine (VM) reads at startup to tune its garbage collector. It sets the maximum number of generational (minor) collections a process may run before the VM forces a full-sweep (major) collection, which also reclaims older memory that generational passes skip. By default, this is set to 65535.
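To see which value a node is actually running with, you can ask the VM directly. The snippet below is a minimal sketch meant for an `iex` session attached to your app; it uses `:erlang.system_info/1` and `Process.info/2`, and the variable names are only illustrative.

```elixir
# VM-wide default: the number of generational collections allowed before
# a full sweep is forced. ERL_FULLSWEEP_AFTER overrides this at boot.
{:fullsweep_after, vm_default} = :erlang.system_info(:fullsweep_after)
IO.puts("VM default fullsweep_after: #{vm_default}")

# Per-process view (here, the current process): its own fullsweep_after
# limit and the minor (generational) collection count the VM reports for it.
{:garbage_collection, gc_info} = Process.info(self(), :garbage_collection)
IO.inspect(Keyword.take(gc_info, [:fullsweep_after, :minor_gcs]), label: "this process")
```

On a node started without any override, the first line should report the default of 65535 mentioned above.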

ERL_FULLSWEEP_AFTER 20

The author of Real-Time Phoenix mentions having success with a value of 20 for their application, so that is what I set my environment variable to, but you can use any value that works for you.
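After a deploy, it is worth confirming that the variable actually reached the VM. Here is a rough sketch of such a check; `FullsweepCheck` is a hypothetical helper name of my own, not something from Phoenix or the book, and it assumes the variable was exported in the environment the node was started from.

```elixir
defmodule FullsweepCheck do
  @moduledoc "Hypothetical helper: not part of Phoenix, Erlang/OTP, or the book."

  # Compares the ERL_FULLSWEEP_AFTER environment variable (if present)
  # with the default the running VM reports.
  def status do
    {:fullsweep_after, effective} = :erlang.system_info(:fullsweep_after)

    case System.get_env("ERL_FULLSWEEP_AFTER") do
      nil ->
        # Variable not set: the VM is using its built-in default.
        {:not_set, effective}

      raw ->
        case Integer.parse(raw) do
          # The variable parses to exactly the value the VM is using.
          {^effective, ""} -> {:ok, effective}
          # Unparseable value, or the VM is using something else.
          _ -> {:mismatch, raw, effective}
        end
    end
  end
end

IO.inspect(FullsweepCheck.status())
```

With the variable set to 20 and picked up at boot, I would expect this to print `{:ok, 20}`; a `:not_set` or `:mismatch` result suggests the setting never made it into the node's environment.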

The Result!

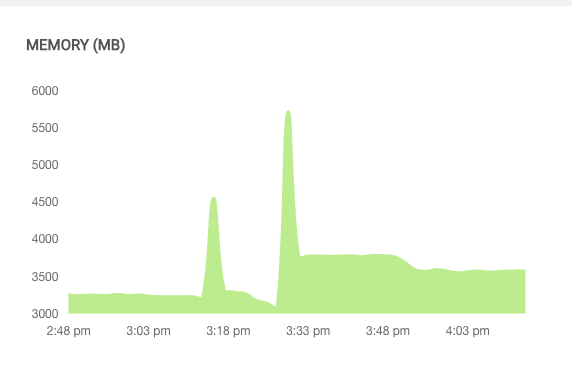

Within minutes of setting the ERL_FULLSWEEP_AFTER environment variable to 20, I saw results in my Gigalixir memory consumption dashboard. Memory usage dropped dramatically, from 6 gigabytes down to around 3.5 gigabytes. Unfortunately, I forgot to snap a screenshot of that initial drop, but the screenshots here still show the updated setting at work.

The above image shows memory consumption after two deployments. You can see the memory consumption jump up to my historical average of around 6 gigabytes after all existing users on the application reconnect to the new instance. Once the connection processes have each gone through 20 minor garbage collections, a major (full-sweep) collection is triggered, and you see a significant drop.

In this image, those spikes are Twitch streamers going live and connections for all their viewers being made. You can quickly see how much memory connections can take up. But after 20 minor garbage collections, the major garbage collector starts cleaning things up, and you see a significant drop. Amazing! With one simple setting, I solved my memory consumption woes without issues.
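If you would rather look at this from inside the node than from a dashboard, the sketch below lists the five largest processes along with their GC counters. It relies only on `Process.list/0` and `Process.info/2`; the map keys are names I picked for illustration.

```elixir
# Top 5 processes by memory, with each one's GC counters.
top =
  Process.list()
  |> Enum.map(fn pid -> {pid, Process.info(pid, [:memory, :garbage_collection])} end)
  # Process.info/2 returns nil for processes that exited in the meantime.
  |> Enum.reject(fn {_pid, info} -> is_nil(info) end)
  |> Enum.map(fn {pid, info} ->
    gc = info[:garbage_collection]

    %{
      pid: pid,
      memory_bytes: info[:memory],
      minor_gcs: gc[:minor_gcs],
      fullsweep_after: gc[:fullsweep_after]
    }
  end)
  |> Enum.sort_by(& &1.memory_bytes, :desc)
  |> Enum.take(5)

IO.inspect(top)
```

Processes spawned after the setting takes effect should show `fullsweep_after: 20`, and comparing `minor_gcs` with that limit gives a rough idea of how far each process is from its next full sweep.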

Conclusion

If you're building a real-time application using Phoenix Channels, it's essential to be mindful of memory usage, especially if you're dealing with long-lived connections. While Phoenix Channels are fantastic for working with WebSocket connections, they can cause memory bloat once you have thousands of long-lived processes. However, with a bit of knowledge and a tweak to the ERL_FULLSWEEP_AFTER setting, you can force full-sweep garbage collection sooner and dramatically reduce memory consumption. So, if you're experiencing a similar issue with your Phoenix Channels application, give this solution a try and see the results for yourself.

I would also like to make a plug for Real-Time Phoenix by Stephen Bussey. It's an awesome book, and you can find the passage referenced in the chapter Manage Your Application’s Memory Effectively, along with more goodies.

Happy coding!